How MIT's Modular-Things is Redefining Hardware Design Through Virtualization

Insights | 25-05-2023 | By Robin Mitchell

Recently, a collaboration between MIT researchers and Hack Club developed a new toolset that helps to virtualise projects by adding microcontrollers to most system components, thereby allowing for the development of complex projects. What challenges do monolithic designs face, what did the collaboration develop, and why could it be the future of hardware design?

What challenges do monolithic designs face?

Just about all electronic systems manufactured today are monolithic, meaning that each is designed as a single unit that can operate independently. Of course, plenty of devices connect to other devices over interfaces such as LAN and USB, but fundamentally, each device has its own PCB containing all the parts it needs to function.

This type of development has worked well since the founding of the electronics industry, as it couples well with standard manufacturing processes, allows designs to be shrunk considerably, and lets individual teams develop their own complete products and solutions with minimal reliance on others.

As well as monolithic designs have worked, they come with some major drawbacks. One example of where a monolithic design would fail is the datacentre, which consists of thousands of server racks, Ethernet cables, and hard drives. It is perfectly possible to build a datacentre as a single computational unit, not so dissimilar to the Cray-1 supercomputer, and such a design would be significantly smaller than the datacentres currently in operation.

However, such a monolithic design would have millions of individual components, all of which carry a risk of failing. A single failure can bring down the entire system, and identifying the damaged part can be a monumental task. By building datacentres from modular parts that are effectively individual functional units, it is easy to spot which systems have failed, and the use of racks allows those units to be quickly swapped out. The same applies to hard drives; by having multiple hard drives in each server rack, faulty units can be identified and replaced with minimal impact on overall system performance.
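The reliability argument above can be made concrete with a little arithmetic. The sketch below assumes, purely for illustration, that every component fails independently and with the same small probability over some fixed period. The component counts and the failure probability are made-up numbers, not measurements from any real datacentre.

```python
def prob_all_working(n_components: int, p_fail: float) -> float:
    """Probability that every one of n independent components is working."""
    return (1.0 - p_fail) ** n_components


# Hypothetical numbers, chosen only to show how the risk scales.
P_FAIL = 1e-6               # assumed chance that a single component fails
MONOLITH_PARTS = 1_000_000  # every part lives in one inseparable unit
MODULE_PARTS = 1_000        # the same parts split into swappable modules

monolith_ok = prob_all_working(MONOLITH_PARTS, P_FAIL)
module_ok = prob_all_working(MODULE_PARTS, P_FAIL)

print(f"Monolith fully working:   {monolith_ok:.3f}")
print(f"One module fully working: {module_ok:.4f}")
```

Under these assumptions the monolith has roughly a 37% chance of having no failed part, while any single module is almost certainly healthy (about 99.9%). In the modular case a fault also stays confined to one unit that can be swapped, whereas in the monolith any single fault affects the whole system.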

The same applies to the software that runs a datacentre. While it is perfectly possible for a single monolithic software system to run every task on a single machine, modern operating systems separate individual functions from the core system. This software modularity allows new functions to be added and old ones removed without changing the underlying software infrastructure.

MIT researchers develop modularised virtual hardware

Recognising the advantages of modularised designs, researchers from MIT, in collaboration with Hack Club, have recently developed a new modular hardware toolchain that allows for complex projects to be built on top of modular hardware.

As previously stated, traditional projects will typically be built on top of a monolithic design, and the software running on this will also reside on one specific device. But while this allows for efficiency in both space and resources, it can lead to numerous challenges, including system complexity, reliability, and even e-waste (as projects can rarely be reused and repurposed with ease).

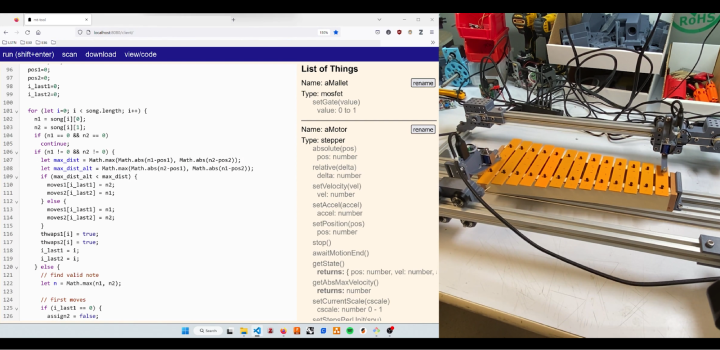

The new system developed by MIT is a two-pronged solution that modularises key devices in a project and provides a toolchain that sits on top of the entire project as a virtualised hardware system. Individual pieces of hardware, such as buttons, LEDs, and stepper motors, each have their own controller, which makes sure the hardware is driven correctly while also providing advanced features for that hardware. These devices are then connected together in a single project network that allows all of them to communicate with a larger software environment.

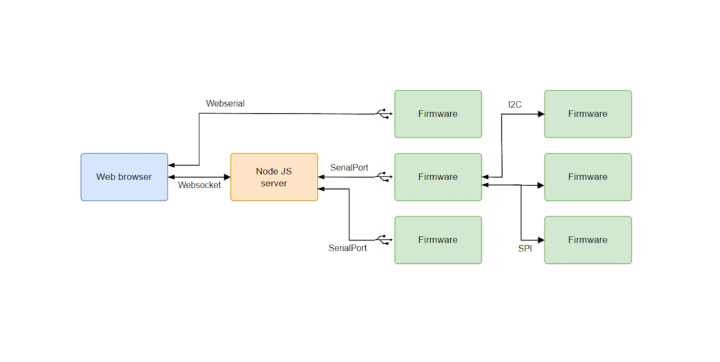

The researchers noted that this setup is similar to UNIX, whereby each module in a project acts as its own service, independent from the greater operating system. By doing so, complex systems can be created that are agnostic of the underlying hardware. According to the research conducted by MIT and Hack Club, their tools consist of a set of single-purpose embedded devices and a link-layer-agnostic message-passing system for communication between devices. They have also developed a web-based programming environment to facilitate the programming of the virtualised software objects [1].
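To illustrate what "link-layer agnostic" means in practice, here is a minimal Python sketch. It is not the Modular-Things implementation: the `Link` interface, the two stand-in transports, and the JSON message format are all assumptions made for this example, since the article does not describe the actual wire format. The point is only that the messaging functions never need to know which transport carries the bytes.

```python
import json
from abc import ABC, abstractmethod
from collections import deque
from typing import Optional


class Link(ABC):
    """Hypothetical transport interface: anything that can move byte frames."""

    @abstractmethod
    def send(self, frame: bytes) -> None: ...

    @abstractmethod
    def receive(self) -> Optional[bytes]: ...


class QueueLink(Link):
    """Stand-in for a binary transport: frames pass through unchanged."""

    def __init__(self) -> None:
        self._frames = deque()

    def send(self, frame: bytes) -> None:
        self._frames.append(frame)

    def receive(self) -> Optional[bytes]:
        return self._frames.popleft() if self._frames else None


class HexLink(Link):
    """Stand-in for a text-only transport: frames travel as hex strings."""

    def __init__(self) -> None:
        self._wire = deque()

    def send(self, frame: bytes) -> None:
        self._wire.append(frame.hex())

    def receive(self) -> Optional[bytes]:
        return bytes.fromhex(self._wire.popleft()) if self._wire else None


def send_message(link: Link, target: str, command: str, value) -> None:
    """Encode a message (assumed JSON format) and hand it to any Link."""
    message = {"to": target, "cmd": command, "value": value}
    link.send(json.dumps(message).encode("utf-8"))


def receive_message(link: Link) -> Optional[dict]:
    """Decode the next message from any Link, or None if nothing is waiting."""
    frame = link.receive()
    return None if frame is None else json.loads(frame.decode("utf-8"))


# The same messaging code runs unchanged over both transports.
for link in (QueueLink(), HexLink()):
    send_message(link, "led", "set_brightness", 0.5)
    print(type(link).__name__, receive_message(link))
```

Adding another transport in this sketch means writing one more `Link` subclass; the code that builds and reads messages stays the same.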

The framework that controls such projects is separated into two layers: the first is based on an Arduino library and handles module discovery and recognition, while the second is a web-based IDE that allows for programming in JavaScript. It should also be noted that the project itself is executed in-browser, meaning that the project logic runs in a “virtual space” [1].
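The split between a discovery layer and a project-logic layer can be sketched as follows. This is a simplified Python illustration, not the real toolchain (which, per the article, uses Arduino-based firmware and in-browser JavaScript). Every class and function name here, including `FakeDevice`, `VirtualObject`, and `discover`, is hypothetical, and the hardware is simulated in-process.

```python
class FakeDevice:
    """Simulated module; a real one would be a microcontroller with firmware."""

    def __init__(self, name: str, kind: str) -> None:
        self.name = name
        self.kind = kind
        self.state = {}

    def handle(self, command: str, value):
        # Record the last value received for each command.
        self.state[command] = value
        return value


class VirtualObject:
    """Software proxy that project code talks to instead of the hardware."""

    def __init__(self, device: FakeDevice) -> None:
        self._device = device

    def call(self, command: str, value):
        return self._device.handle(command, value)


class Led(VirtualObject):
    def set_brightness(self, level: float):
        # Clamp to the valid range before forwarding to the device.
        return self.call("brightness", max(0.0, min(1.0, level)))


class Stepper(VirtualObject):
    def move_to(self, position: int):
        return self.call("target", position)


PROXY_TYPES = {"led": Led, "stepper": Stepper}


def discover(devices):
    """Layer 1: recognise attached modules and wrap each in a typed proxy."""
    return {d.name: PROXY_TYPES[d.kind](d) for d in devices if d.kind in PROXY_TYPES}


# Layer 2: project logic, written purely against the virtual objects.
bus = [FakeDevice("status", "led"), FakeDevice("x_axis", "stepper")]
things = discover(bus)
things["status"].set_brightness(1.4)  # out of range, clamped to 1.0
things["x_axis"].move_to(200)
print(bus[0].state, bus[1].state)
```

Because the project logic only touches the proxies, swapping a simulated device for a different one of the same kind would not require changes to that logic, which is the property the article describes as hardware-agnostic.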

Could modular designs be the future of electronics?

Currently, the vast majority of electronic products are monolithic, and this primarily comes down to the numerous benefits that such an architecture has; it allows products to be more easily manufactured, reduces system costs, and even helps to encourage repeated sales as devices degrade and require replacement. However, the rise of open-source software, the eventual rise in open-source hardware, and the need to reduce e-waste could all encourage engineers to move towards more modularised designs.

Undoubtedly, the concept developed by the MIT researchers is more expensive than a traditional design, but it clearly has some major advantages. For example, complex projects can be made simpler, and the use of a web-based interface allows for projects to be changed on-the-fly without the need for programmers. At the same time, it also reduces the need to interact with firmware, separating the functionality of a project from its low-level routines.

What the researchers have developed certainly presents some exciting opportunities for engineers and could even present a more environmentally friendly method for developing future projects.

Reference

- [1] Read, J.R., Mcelroy, L., Bolsee, Q., Smith, B., & Gershenfeld, N. (2023). "Modular-Things: Plug-and-Play with Virtualized Hardware". https://dl.acm.org/doi/10.1145/3544549.3585642