How Will AR Make Us Safer Drivers?

10-03-2020 | By Philip Spurgeon

Augmented reality has been knocking around for a while now. Yet despite the versatility of the technology, it hasn’t been adopted as widely as one might expect, Pokemon Go! aside. Some retailers have developed apps that allow you to ‘try before you buy’, so shoppers can see themselves in an outfit or size up a sofa for the living room.

Zara has launched an augmented reality app to boost customer engagement.

Architects are waking up to the idea of using AR to present what their designs could look like. It can also help architects and contractors to identify potential problems before they happen.

Augmented reality also represents a genuinely exciting education opportunity. While some schools have embraced virtual reality, AR has huge potential without the price tag. AR can present classrooms with 3D models that the children can interact with, and these interactions can surface additional information, giving them a deeper learning experience. On a simpler level, AR can help children learn other languages, as environments can be tagged with the correct terms and how to pronounce them.

So the augmented reality market is growing. But the industry that possibly stands to gain the most is automotive. With modern cars akin to computers on wheels, now seems like the time to augment things a little…

How does Augmented Reality Work?

Anyone who has used Snapchat filters or the aforementioned Pokemon Go! has experienced augmented reality. The concept is to superimpose graphics, sound, haptic feedback and other sensory enhancements over the real world, in real time. This can be delivered through a smartphone or a smart lens built into headgear. AR works by using computer vision, simultaneous localisation and mapping (SLAM), and depth tracking data. The device then processes that data in order to show digital content relevant to what the user is looking at. In other words, the device pulls together contextual data, based on specific parameters, in order to provide relevant and timely information to the user.

The contextual element is key. Augmented reality programmes are far closer to machine learning than artificial intelligence, insofar as they serve you information whether you like it or not. So a shopping app could tell a soldier which store front they were hiding in, but it won’t tell them what their enemy is armed with. In other words, while there is an app for that, it is very specifically for ‘that’. This apparent limitation is also one of augmented reality’s strengths, as it can leverage almost any form of data to provide the user with information that would otherwise be unavailable. To use the soldier as an example again: smart armour with an augmented reality enhanced helmet can pull information from the environment, from fellow soldiers and from a data link with command.

Ghost Recon

This would provide a tactical overlay indicating enemy positions, weapons signatures and the condition of the unit, as well as environmental factors like heat, humidity and wind speed. Such technology would dramatically increase combat effectiveness while decreasing risk. Combined with smart fabrics or thermoelectric materials, both soldiers on the ground and command could monitor troop welfare far more closely. While it’s safe to say augmented reality will make it into combat, there is one market that has yet to fully exploit it, and it can do so in a big way.

What can AR do for Cars?

Roads are busier than ever. Experts predict there will be 2 billion cars on the world’s roads by 2035. That means lots of traffic. It also means lots of obstructions and hazards. This makes it more important than ever for drivers to have their attention firmly on the road. Using a combination of sensors and augmented reality, drivers can have crucial information presented on the windscreen. Using current technology, manufacturers could present drivers with speed, revs, fuel and even the current radio station. In fact, manufacturers like BMW, Jaguar, Mazda and Toyota do exactly that. They can even offer lane guidance. That’s handy for sure. It’ll also help to keep the driver’s attention on the road rather than fiddling with buttons on the centre console.

However, combining GPS, motion sensors and cameras with augmented reality is where things get interesting. Motion sensors and cameras can be programmed to detect traffic slowing. Combined with GPS telemetry, the car can warn the driver of congestion up ahead. This gives the driver plenty of time both to slow down and, where possible, to find an alternative route. Significantly, it could also identify and warn drivers of pedestrians walking behind a parked car. This gives them time to slow down in case the pedestrian steps out onto the road. The same technology can also warn drivers if their car is too close to another car, whether moving or parked, or provide a camera feed of blind spots to avoid collisions and make manoeuvring easier. Indeed, there’s nothing stopping manufacturers from replacing the rearview mirror with a camera feed projected onto the windscreen.
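To make the proximity warnings described above a little more concrete, here is a minimal Python sketch of how a car might decide which detected objects deserve a warning on the windscreen. It is an illustration only, not any manufacturer’s method: the `Detection` fields, the function names and the 3-second threshold are all assumptions made for the example. It ranks objects by time to collision, which is simply the gap divided by the speed at which the gap is closing.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Detection:
    """One object reported by the car's sensors (hypothetical structure)."""
    kind: str                 # e.g. "vehicle" or "pedestrian"
    distance_m: float         # gap between our car and the object, in metres
    closing_speed_mps: float  # positive when the gap is shrinking


def time_to_collision(d: Detection) -> Optional[float]:
    """Seconds until the gap closes, or None if the gap is not shrinking."""
    if d.closing_speed_mps <= 0:
        return None
    return d.distance_m / d.closing_speed_mps


def overlay_warnings(detections: List[Detection],
                     ttc_threshold_s: float = 3.0) -> List[str]:
    """Return warning texts for the overlay, most urgent first.

    The 3-second default is an illustrative assumption, not a standard.
    """
    urgent = []
    for d in detections:
        ttc = time_to_collision(d)
        if ttc is not None and ttc < ttc_threshold_s:
            urgent.append((ttc, f"{d.kind} ahead: {d.distance_m:.0f} m"))
    urgent.sort(key=lambda item: item[0])  # smallest time to collision first
    return [text for _, text in urgent]


if __name__ == "__main__":
    seen = [
        Detection("vehicle", 40.0, 10.0),    # 4.0 s away: no warning yet
        Detection("pedestrian", 12.0, 8.0),  # 1.5 s away: warn
        Detection("vehicle", 20.0, 10.0),    # 2.0 s away: warn
        Detection("vehicle", 5.0, -2.0),     # pulling away: ignore
    ]
    for line in overlay_warnings(seen):
        print(line)
```

A real system would need to fuse camera, radar and GPS data before it could produce detections like these, and would have to cope with noisy measurements. The sketch only shows the final step of turning sensor readings into a short, prioritised list for the driver.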

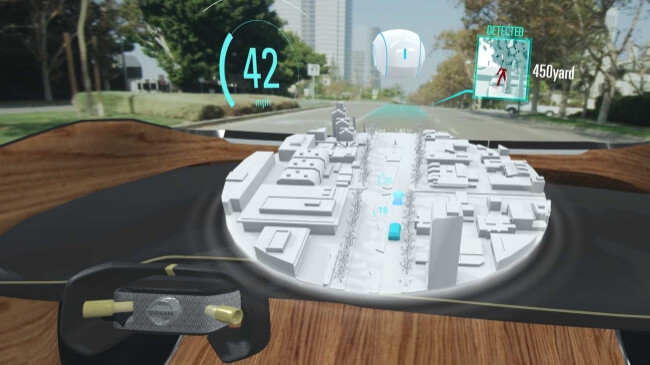

On a more basic level, augmented reality can highlight road markings, traffic lights, crossings and so on. This is especially useful if road markings are worn or obscured by adverse weather conditions, or if you’re driving at night. Or all of the above. Combined with lane guidance technology, it is easy to see how road users can benefit.

On a more personal level, lacing car seats with thread-based transistors could capture information about those on board. Drivers could be warned about their hydration levels, or even comfort levels, prompting them to stop for a rest. The car could even use internal cameras to detect if the driver is tired (or drunk) based on the amount of attention they are paying to the road. The car could then either prompt the driver to pull over and rest, or take control and find somewhere safe to stop. Considering 1 in 6 incidents on UK roads were related to fatigue, combining technologies in this way could save a lot of lives.

The technology can also help to make autonomous cars safer. One of the big problems with autonomous cars is that they are only as good as their programming. They lack the experience and human intuition sometimes required to unstick a sticky situation. An augmented reality system that continuously updates occupants with information, such as developing hazards or a car driving erratically, therefore allows the driver to make an informed decision about whether to intervene.

Car manufacturer Nissan has taken this one step further with its Invisible-to-Visible innovation. Invisible-to-Visible (I2V) uses a 3D augmented reality interface that consolidates data from what it has dubbed the Metaverse.

Sadly nothing to do with superheroes, the Metaverse is a virtual world made up of sensor data, cloud data and artificial intelligence. I2V will be able to understand this ‘invisible’ information and make it visible to the driver through augmented reality. The AR co-pilot will respond to voice commands as well as provide you with relevant information unprompted. Nissan is hoping to release a version of I2V by 2025, by which time, it hopes, drivers will have the option of handing the controls over to the co-pilot. This would allow them to take a break rather than drive tired. Nissan believes ‘Invisible-to-Visible creates limitless possibilities for services and communications that will make driving more convenient, comfortable, and exciting.’ Unless the co-pilot is Maverick from Top Gun, exciting might be a stretch.
