Nvidia announced its latest open platform called Omniverse

12-11-2021 | By Sam Brown

Recently, Nvidia announced its latest open platform, Omniverse, which enables virtual collaboration and accurate real-time simulation for AI training. What challenges does modern AI face, what exactly does the Omniverse platform do, and how does Omniverse demonstrate the growing importance of digital twins?

Why does AI face challenges in training?

AI seems to have grown at an unprecedented rate over the past decade. At the start of the 2010s, AI was largely confined to a few niche projects that showed potential. Fast forward to 2021, and AI is almost everywhere. Refined algorithms and dedicated hardware now let AI tasks run efficiently and effectively, and even the simplest devices can have access to AI in some form.

AI has been so successful because it can process data, discover patterns, and then interpret new data it has never seen before. Traditional algorithms essentially reduce a problem to a series of if statements that check for conditions. This works well for operating systems, terminal programs, and games, but it is unsuited to environments that can change drastically while still containing similar elements.

For example, hardcoding a self-driving car is virtually impossible because of the sheer variety of road designs, landscapes, and drivers. Instead, AI can look at these different situations, try to infer what it is seeing, categorise things of interest, and then react based on past experience. This learning process does not involve writing explicit rules. Instead, it relies on vast amounts of data labelled with what that data contains (similar to a question-and-answer sheet).
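To make the "question-and-answer sheet" idea concrete, here is a toy sketch in Python. It is not how Omniverse or any production driving system works. The features (object width and height), labels, and numbers are hypothetical. The sketch also uses a simple nearest-centroid classifier in place of the deep neural networks that real systems rely on. The point is that no rule about cars or pedestrians is written by hand: the model is built entirely from labelled examples and is then applied to an object it has never seen.

```python
from statistics import mean

# Hypothetical labelled training data: (width_m, height_m) -> label.
# Each pair is a "question" (the measurements) and its "answer" (the label).
TRAINING_DATA = [
    ((0.5, 1.7), "pedestrian"),
    ((0.6, 1.8), "pedestrian"),
    ((0.4, 1.6), "pedestrian"),
    ((1.8, 1.5), "car"),
    ((1.9, 1.4), "car"),
    ((2.0, 1.6), "car"),
]


def train(data):
    """Learn one centroid (average feature vector) per label; no rules are hand-written."""
    groups = {}
    for features, label in data:
        groups.setdefault(label, []).append(features)
    return {
        label: tuple(mean(values) for values in zip(*points))
        for label, points in groups.items()
    }


def classify(model, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def distance(centroid):
        return sum((f - c) ** 2 for f, c in zip(features, centroid))
    return min(model, key=lambda label: distance(model[label]))


if __name__ == "__main__":
    model = train(TRAINING_DATA)
    # Classify an object the model has never seen before.
    print(classify(model, (1.7, 1.5)))  # car
```

Adding more labelled examples improves the model without anyone editing the code. This is why a shortage of good training data, rather than a shortage of programmers, becomes the bottleneck.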

However, training self-driving AI is difficult because it requires experience on real roads, where such vehicles are often not allowed to operate. Furthermore, many of the events that can happen on the road are unexpected, which makes it challenging to produce data for them. As a result, AI systems are often held back by a lack of appropriate training data.

Nvidia announces Omniverse platform

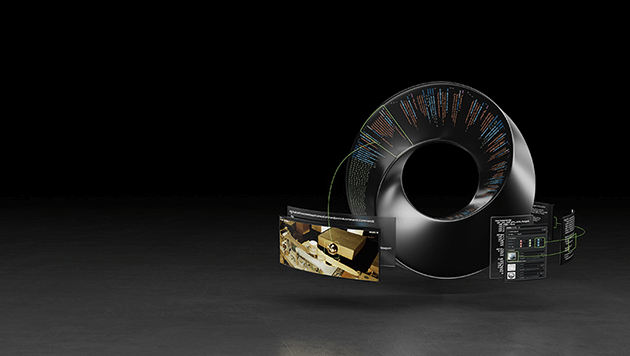

Nvidia has announced the development of a new open platform called Omniverse, which has been billed as the solution for testing future AI systems. Essentially, Omniverse is a software platform that combines Nvidia GPU technology with Pixar's Universal Scene Description (USD) to create digital environments that can be used to train AI.

The platform has been designed to be extensible and modular, so that developers can easily build frameworks tailored to the application they are used in. Furthermore, large portions of the platform have been designed with low-code and no-code development in mind, using Python to speed up project implementation.

One technology of particular interest is Nvidia Drive Sim, which creates a simulated world for training self-driving systems. The simulated environment is physically accurate and incorporates multi-sensor simulation in rich 3D environments, all running in real time.

Omniverse Replicator is another tool in the Omniverse platform. It generates physically accurate synthetic data that real-world AI algorithms can learn from. The Omniverse platform also integrates other tools, such as AI assistants that can use Nvidia technology for speech synthesis and analysis.

How digital twins may be the future of AI training

Training AI can be challenging when large amounts of data are required, but the advent of digital twins could solve this. Creating AI is difficult, but creating simulations with random events and realistic physical interactions is comparatively easy. This is already widely done in video games, which use physics engines to create realistic object interactions and simulate the properties of matter (such as fluids).

As such, it may be possible to make a real-world simulation accurate enough for AI systems to learn from. Such a simulation, often referred to as a digital twin, can be run many millions of times with different initial conditions. This could expose an AI to unusual situations that would rarely occur during real-world testing, helping it respond appropriately to whatever happens in practice.
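Running a scenario many times with randomised starting conditions can be illustrated with a deliberately simplified Python sketch. It is not Drive Sim and does not use any Omniverse API. It randomises the initial conditions of a hypothetical emergency-braking scenario: vehicle speed, driver reaction time, road surface, and the distance to an obstacle. It then applies the basic kinematic stopping-distance formula (reaction distance plus v² / (2μg)) and collects the runs that end in a collision. All value ranges and friction coefficients are illustrative assumptions.

```python
import random

G = 9.81  # gravitational acceleration, m/s^2


def stopping_distance(speed, reaction_time, friction):
    """Distance covered while reacting, plus braking distance v^2 / (2 * mu * g)."""
    return speed * reaction_time + speed ** 2 / (2 * friction * G)


def random_scenario(rng):
    """Draw one set of randomised initial conditions (all ranges are hypothetical)."""
    return {
        "speed": rng.uniform(5.0, 35.0),               # m/s (roughly 18-126 km/h)
        "reaction_time": rng.uniform(0.1, 1.0),        # s
        "friction": rng.choice([0.8, 0.5, 0.1]),       # dry, wet, icy road
        "obstacle_distance": rng.uniform(5.0, 150.0),  # m
    }


def run_trials(n, seed=0):
    """Run n randomised episodes and return those where the car cannot stop in time."""
    rng = random.Random(seed)  # seeded so every run is reproducible
    failures = []
    for _ in range(n):
        s = random_scenario(rng)
        needed = stopping_distance(s["speed"], s["reaction_time"], s["friction"])
        if needed > s["obstacle_distance"]:
            failures.append(s)
    return failures


if __name__ == "__main__":
    n = 100_000
    failures = run_trials(n)
    print(f"{len(failures)} of {n} scenarios ended in a collision")
```

The collected failures are the rare, dangerous situations that a training pipeline would want to show an AI far more often than real roads ever could. Seeding the generator keeps each batch reproducible, so a problematic scenario can be replayed exactly.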

However, caution should be exercised when using digital twins, as real life is often more unpredictable than we would like. Just because a self-driving AI has 10 million miles of simulated road experience does not mean it is anywhere near ready for use on real roads.