Low Power Photonic Chip Uses Light Instead of Electricity

24-06-2019 | By Rob Coppinger

Optical neural networks could operate 10 million times more efficiently than their conventional electrical counterparts with a new photonic accelerator chip, according to simulations.

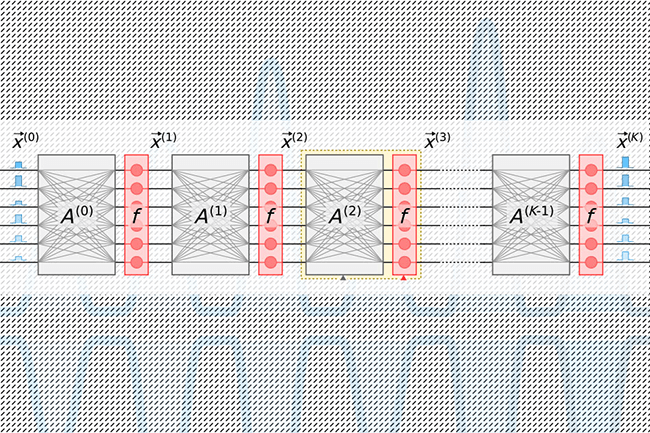

The photonic accelerator chip, designed by researchers at the Massachusetts Institute of Technology (MIT), encodes data using optical signals, a method its developers claim is more compact and energy-efficient. The electronics for neural networks come in two types: the conventional type, which uses the microchips found in any computer today, and the optical, optoelectronic semiconductor type, which is faster. Neural networks are machine-learning models used for object identification, natural language processing, drug development, medical imaging and driverless cars.

Despite its greater speed, an optical network shares two drawbacks with conventional microchip neural networks, according to MIT staff. First, as the networks grow in size, they consume large quantities of power. Second, the accelerator chips they use have theoretical minimum energy consumption levels below which they will not work. The low-power MIT photonic chip would be used with the optoelectronic systems, as it is said to perform orders of magnitude more efficiently.

“There’s a growing demand for data centres for running large neural networks, and it’s becoming increasingly computationally intractable as the demand grows,” says MIT Research Laboratory of Electronics’ (RLE) graduate student, Alexander Sludds. The aim is “to meet computational demand with neural network hardware … to address the bottleneck of energy consumption and latency.”

A new photonic chip design drastically reduces the energy needed to compute with light, with simulations suggesting it could run optical neural networks 10 million times more efficiently than their electrical counterparts. Credit: MIT

More compact

The simulations of the MIT photonic chip indicate that the accelerator could potentially process neural networks at energy levels more than 10 million times below the energy-consumption limit of conventional microchip accelerators, and about 1,000 times below the energy levels of existing photonic accelerators. Another barrier to the use of photonic chips to date has been their bulky optical components.

That bulk means that the optical components that act as input neurons in the network can number no more than about 100 on the silicon substrate without a substantial increase in the microchip’s size. That limit of 100 makes large-scale neural networks impossible. The MIT photonic accelerator chip uses more compact optical components and optical signal-processing techniques to substantially reduce the size of the chip and its power consumption. As such, it is expected to be used for large-scale neural networking.

The researchers found in their simulations that their photonic accelerator could operate with sub-attojoule efficiency. An attojoule is 10 to the power of minus 18 joules, or one-quintillionth of a joule. Conventional accelerators operate at energy levels of picojoules, or trillionths of a joule. An attojoule is a million times smaller than a picojoule.
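
The relationship between the two units can be checked with a few lines of arithmetic. The sketch below assumes only the standard SI prefix definitions; the variable and function names are illustrative and do not come from the MIT work.

```python
# Standard SI prefix exponents (powers of ten, relative to one joule).
PICO_EXPONENT = -12  # 1 picojoule = 10**-12 joules
ATTO_EXPONENT = -18  # 1 attojoule = 10**-18 joules


def prefix_ratio(larger_exponent: int, smaller_exponent: int) -> int:
    """Return how many of the smaller unit fit into one of the larger unit.

    Working with integer exponents keeps the result exact and avoids
    floating-point rounding on very small numbers.
    """
    return 10 ** (larger_exponent - smaller_exponent)


attojoules_per_picojoule = prefix_ratio(PICO_EXPONENT, ATTO_EXPONENT)
print(f"1 picojoule = {attojoules_per_picojoule:,} attojoules")
# prints "1 picojoule = 1,000,000 attojoules"
```

This covers only the gap between the units themselves. The "10 million times" figure quoted from the simulations is a separate comparison against the energy-consumption limit of conventional accelerators.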

Sludds’ colleagues working on the photonic accelerator chip include RLE postdoctoral researcher Ryan Hamerly, RLE graduate student Liane Bernstein, MIT physics professor Marin Soljacic, and Dirk Englund, MIT associate professor of electrical engineering and computer science and head of the Quantum Photonics Laboratory.